The PDF is here to stay. In today’s work environment, the PDF has become ubiquitous as a digital replacement for paper and holds a variety of important business data.

But what are the options if you want to extract data from PDF documents? Manually rekeying PDF data is often the first reflex, but it quickly breaks down because it is slow, error-prone, and hard to scale.

In this article, we talk about PDF data extraction solutions (PDF parsers) and how to eliminate manual data entry from your workflow.

Extract Data From PDF Documents

Automate menial data entry tasks with Docparser.

No credit card required.

How to extract data from a PDF

Manually re-keying data from a handful of PDF documents

Let’s be honest. If you only have a couple of PDF documents, the fastest route to success can be manual copy & paste. The process is simple: open every document, select the text you want to extract, and copy & paste it to where you need the data.

Even when you want to extract table data, selecting the table with your mouse pointer and pasting the data into Excel will give you decent results in many cases. You can also use the free tool Tabula to extract table data from PDF files. Tabula returns a spreadsheet file (for example, CSV) that you will probably need to post-process manually. Tabula does not include an OCR engine, but it’s a good starting point if you deal with native PDF files (not scans).
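Post-processing a Tabula CSV export usually follows the same few steps: trim whitespace, drop empty rows, and turn number strings into numbers. The sketch below is a minimal example, assuming the table has already been exported to CSV text; `clean_table_csv` and `parse_number` are hypothetical helper names, and real exports may need extra rules (merged cells, rows split across lines).

```python
import csv
import io


def parse_number(cell: str):
    """Convert strings such as '1,234.50' to float; return other text unchanged."""
    try:
        return float(cell.replace(",", ""))
    except ValueError:
        return cell


def clean_table_csv(raw_csv: str) -> list:
    """Strip whitespace, drop empty rows, and convert numeric cells."""
    rows = []
    for row in csv.reader(io.StringIO(raw_csv)):
        cells = [cell.strip() for cell in row]
        if not any(cells):
            continue  # skip blank separator rows between table sections
        rows.append([parse_number(cell) if cell else "" for cell in cells])
    return rows


raw = 'Item, Qty ,Price\n,,\nWidget,2,"1,234.50"\n'
print(clean_table_csv(raw))  # [['Item', 'Qty', 'Price'], ['Widget', 2.0, 1234.5]]
```

Keeping non-numeric cells as strings means header rows and item descriptions pass through untouched.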

Outsourcing manual data entry

Outsourcing data entry is a huge business. There are thousands of data entry providers out there you can hire. To offer fast and cheap services, those companies employ armies of data entry clerks in low-income countries who do the heavy lifting. Data entry providers also use advanced technology to speed up the process; however, the overall workflow is basically the same as the one described above: opening every single document, selecting the right text area, and entering the data into a database or a spreadsheet.

Outsourcing manual data entry comes with a lot of overhead. Finding the right provider, agreeing on terms, and explaining your specific use case only makes economic sense if you need to process high volumes of documents. Even then, it’s likely much more efficient to let automated scan-to-database software, such as Docparser for PDFs or our email parser, do the job.

How do I automate PDF data extraction?

Automated PDF data extraction solutions come in different flavors, ranging from simple OCR tools to enterprise-ready document processing and workflow automation platforms. Most systems share, however, a similar workflow:

- Assemble batches of sample documents that act as training data

- Train the system for each type of document you want to process

- Set up a process to automatically fetch documents, process them and dispatch the data
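The fetch, process, and dispatch steps can be sketched as a small loop. This is a minimal illustration, not how any particular product works: it assumes documents arrive as already-extracted text files in a local inbox folder, and `parse_document` and `run_pipeline` are hypothetical names standing in for a trained parser and a real integration.

```python
import json
import re
from pathlib import Path


def parse_document(text: str) -> dict:
    """Hypothetical stand-in for a trained parser: find the invoice number."""
    match = re.search(r"Invoice\s*(?:No\.?|#)\s*:?\s*(\w+)", text)
    return {"invoice_number": match.group(1) if match else None}


def run_pipeline(inbox: Path, outbox: Path, seen: set) -> int:
    """Fetch new documents, process them, and dispatch results as JSON lines."""
    processed = 0
    with (outbox / "results.jsonl").open("a", encoding="utf-8") as out:
        for path in sorted(inbox.glob("*.txt")):  # fetch
            if path.name in seen:
                continue  # already handled in an earlier run
            record = parse_document(path.read_text(encoding="utf-8"))  # process
            record["source"] = path.name
            out.write(json.dumps(record) + "\n")  # dispatch
            seen.add(path.name)
            processed += 1
    return processed
```

Running the function on a schedule and keeping the `seen` set between runs gives the "automatically fetch" behavior; in practice the set would be persisted rather than kept in memory.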

Advanced solutions use various techniques to train the data extraction system. A simple method is, for example, Zonal OCR, where the user defines specific locations inside the document with a point & click system. More advanced techniques are based on regular expressions and pattern recognition.
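Zonal extraction boils down to a simple idea: keep only the words whose position falls inside a user-defined rectangle. The sketch below assumes an OCR engine or PDF text extractor has already produced words with bounding boxes, with y growing downward as in image coordinates; the `Word` and `Zone` classes and all coordinates are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Word:
    """A word with its bounding box, as produced by OCR or a PDF text extractor."""
    text: str
    x0: float
    y0: float
    x1: float
    y1: float


@dataclass
class Zone:
    """A named rectangle the user drew on a sample document."""
    name: str
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, word: Word) -> bool:
        # Test the word's center so words touching the zone border still count.
        cx = (word.x0 + word.x1) / 2
        cy = (word.y0 + word.y1) / 2
        return self.x0 <= cx <= self.x1 and self.y0 <= cy <= self.y1


def extract_zones(words: list, zones: list) -> dict:
    """Return the text inside each zone, read top-to-bottom, then left-to-right."""
    result = {}
    for zone in zones:
        inside = sorted((w for w in words if zone.contains(w)), key=lambda w: (w.y0, w.x0))
        result[zone.name] = " ".join(w.text for w in inside)
    return result


words = [Word("ACME", 50, 50, 90, 60), Word("Corp", 95, 50, 130, 60), Word("INV-001", 400, 50, 460, 60)]
zones = [Zone("vendor", 40, 40, 200, 70), Zone("invoice_number", 380, 40, 500, 70)]
print(extract_zones(words, zones))  # {'vendor': 'ACME Corp', 'invoice_number': 'INV-001'}
```

The reading-order sort is deliberately simple; words on the same line with slightly different `y0` values would need a tolerance-based line grouping.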

After the initial training period, document data extraction systems offer a fast, reliable, and secure solution to convert PDF documents into structured data automatically. Especially when dealing with many documents of the same type (Invoices, Purchase Orders, Shipping Notes, …), using a PDF Parser is a viable solution.
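For documents of the same type, training often amounts to a set of parsing rules that is reused for every document of that type. As a minimal sketch, assuming the text has already been extracted, the hypothetical `INVOICE_RULES` template below maps field names to regular expressions; the patterns are illustrative and would need to match your actual layouts.

```python
import re

# Hypothetical template for one document type: field name -> regex with one group.
INVOICE_RULES = {
    "invoice_number": re.compile(r"Invoice\s+#?\s*(\S+)"),
    "date": re.compile(r"Date:\s*(\d{4}-\d{2}-\d{2})"),
    "total": re.compile(r"Total:\s*\$?([\d,]+\.\d{2})"),
}


def apply_rules(text: str, rules: dict) -> dict:
    """Run every rule against the text; fields without a match become None."""
    record = {}
    for field, pattern in rules.items():
        match = pattern.search(text)
        record[field] = match.group(1) if match else None
    return record


documents = [
    "Invoice #1001\nDate: 2024-03-01\nTotal: $1,250.00",
    "Invoice #1002\nTotal: $80.00",
]
for doc in documents:
    print(apply_rules(doc, INVOICE_RULES))
```

Returning `None` for missing fields, rather than raising, makes it easy to flag incomplete documents for review instead of stopping the whole batch.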

The case for extracting data from PDF documents

Since its introduction in the early 1990s, the Portable Document Format (PDF) has seen tremendous adoption and become omnipresent in today’s workplaces. PDF files are the go-to solution for exchanging business data internally and with trading partners. Some popular use cases for PDF documents in fields like supply chain, procurement, and business administration are:

- Invoices

- Purchase Orders

- Shipping Notes

- Reports

- Presentations

- Price & Product Lists

- HR Forms

- And more.

All document types mentioned above have one thing in common: they are all used to transfer essential business data from point A to point B.

So far, so good. However, there’s a catch: PDF is just a replacement for paper.

In other words, data stored in PDF documents is hardly more accessible than data written on a piece of paper. This becomes a problem whenever you need to reuse the data stored inside your documents. It raises, for example, the question of how to extract data from PDF to Excel files.
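Once the data has been extracted, getting it into Excel is the easy part. One common approach, sketched below with Python’s standard `csv` module, is writing a CSV file that Excel can open. The `utf-8-sig` encoding prepends a byte-order mark, which helps Excel recognize UTF-8 characters such as umlauts. The `write_for_excel` function and the sample record are hypothetical.

```python
import csv
from pathlib import Path


def write_for_excel(records: list, path: Path) -> None:
    """Write a list of dicts (one per document) to a CSV file for Excel."""
    fieldnames = list(records[0]) if records else []
    # newline="" lets the csv module control line endings itself.
    # "utf-8-sig" prepends a byte-order mark so Excel detects UTF-8.
    with path.open("w", newline="", encoding="utf-8-sig") as f:
        writer = csv.DictWriter(f, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(records)
```

For example, `write_for_excel([{"invoice_number": "1001", "vendor": "Müller GmbH", "total": "1250.00"}], Path("invoices.csv"))` produces a file with one header row and one data row. If you need native `.xlsx` files with formatting, a dedicated spreadsheet library is the better choice.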

The default reflex is to manually rekey data from PDF files or to copy & paste it. However, manual data entry is tedious, error-prone, and costly, and should be avoided. The sections above present different approaches to extracting data from a PDF file. Here, let’s dive into why PDF data extraction can be a challenging task.

Why is it challenging to extract data from PDF files?

There are several reasons why extracting data from PDF can be challenging, ranging from technical issues to practical workflow obstacles.

For starters, a lot of PDF files are scanned images. While those documents are easily readable for humans, computers cannot understand the scanned image text without first applying a method called Optical Character Recognition (OCR).

Once your documents have gone through an OCR PDF scanner and contain text data (not just images), it’s possible to copy and paste parts of the text manually. This method is tedious, error-prone, and not scalable: opening each PDF document individually, locating the text you need, and then selecting and copying it into another piece of software takes far too much time.
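Even after OCR, the recognized text usually needs cleanup before it can be used. A typical example is a numeric field where the OCR engine confused similar-looking characters, such as the letter O for zero or l for one. The sketch below is a hypothetical post-OCR step for amounts; the character mapping is illustrative rather than exhaustive and should only be applied to fields known to be numeric.

```python
import re
from typing import Optional

# Letters that OCR engines commonly mistake for digits (illustrative, not exhaustive).
OCR_DIGIT_FIXES = str.maketrans({"O": "0", "o": "0", "l": "1", "I": "1", "S": "5", "B": "8"})


def clean_ocr_amount(raw: str) -> Optional[float]:
    """Repair common OCR confusions in a numeric field; return None if it is still not a number."""
    candidate = raw.strip().translate(OCR_DIGIT_FIXES)
    # Drop thousands separators and stray spaces inside the number.
    candidate = candidate.replace(",", "").replace(" ", "")
    if not re.fullmatch(r"\d+(\.\d+)?", candidate):
        return None
    return float(candidate)
```

Returning `None` instead of guessing keeps unreadable values visible, so they can be routed to a human for review.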

Does my business need this data?

Data collection, extraction, and analysis are critical for any company. They can help you attract new customers, retain existing ones, and save time and resources. However, the quality of the overall process matters even more than the extraction itself: a good process extracts data quickly and accurately, automates mundane tasks, eliminates mistakes, and makes it easier to locate documents and manage the extracted information.

That’s why it’s crucial to choose the right company to help you extract your data efficiently.

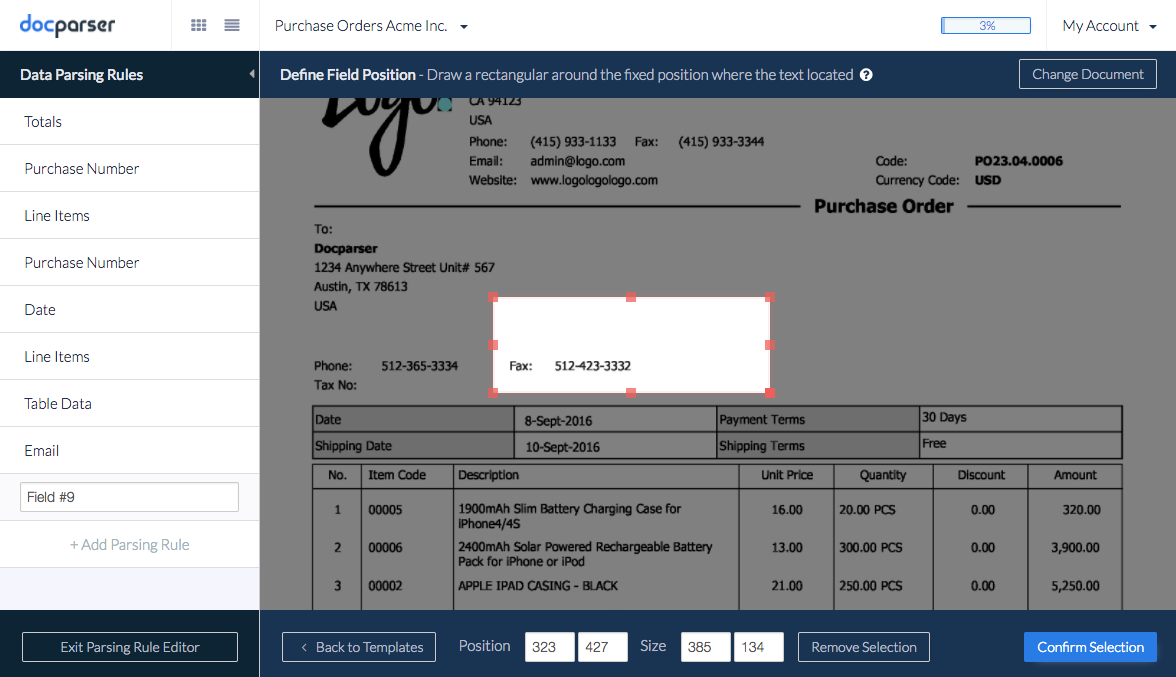

At Docparser, we offer a powerful yet easy-to-use set of tools to extract data from PDF files. Our solution was designed for the modern cloud stack, and you can automatically fetch documents from various sources, extract specific data fields, and dispatch the parsed data in real-time.

Look at our screencast below, which gives you a good idea of how Docparser works.

In the screencast, we introduce:

- What is Docparser

- Free trials

- Document creations

- How to upload samples

- Generating parsing rules for each data field (Spoiler alert: our presets make this easy)

But let’s look more closely at the importance of data extraction and analysis.

What is data extraction?

Data extraction is the process of collecting or retrieving different types of data from a variety of sources. It consolidates information, processes it, and refines the data so it can be stored in a centralized location.

“The best-run companies are data-driven, and this skill sets businesses apart from their competition.” – Tomasz Tunguz

Why should I use a data extraction tool like Docparser?

Extracting data is inevitable in a company. At some point, you’re going to need to extract customer data from forms to upload it to a database. Or perhaps your company wants to consolidate a database or streamline internal processes by merging data sources from different departments. Either way, knowing how to extract data is essential.
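Merging data from different departments usually means normalizing records and removing duplicates before storing them centrally. As a minimal sketch, assuming each department provides records with an invoice number and a total, the hypothetical `consolidate` function below loads them into an in-memory SQLite database using Python’s built-in `sqlite3` module; a real setup would use a persistent database.

```python
import sqlite3


def consolidate(sources: dict) -> sqlite3.Connection:
    """Merge invoice records from several departments into one table."""
    conn = sqlite3.connect(":memory:")
    conn.execute(
        "CREATE TABLE invoices ("
        "source TEXT, invoice_number TEXT UNIQUE, total REAL)"
    )
    for source_name, records in sources.items():
        for rec in records:
            # Refine: normalize invoice numbers and parse totals as numbers.
            number = rec["invoice_number"].strip().upper()
            total = float(str(rec["total"]).replace(",", ""))
            # INSERT OR IGNORE skips invoices already loaded from another source.
            conn.execute(
                "INSERT OR IGNORE INTO invoices VALUES (?, ?, ?)",
                (source_name, number, total),
            )
    conn.commit()
    return conn
```

With this design the first source that reports an invoice wins; if departments can disagree on values, you would compare the duplicates instead of silently ignoring them.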

If done manually, extracting data is a tedious task. Many companies and organizations use an application like Docparser to manage the process from start to finish. Docparser automates the extraction process so you can spend your resources on other priorities.

The benefits of using a data extraction tool include:

- Control. Data extraction allows your company to extract and upload data to your database automatically. As a result, your data won’t fall prey to outdated applications or software. It’s your data, it’s protected, and it’s yours to use and organize.

- Sharing. You can control who has access to your data. Extraction allows you to share data in a standard format and to decide whom to include or exclude.

- Agility. Growing pains are common in any growing company. As companies grow, they need to adjust to working with different data types across separate systems. Data extraction consolidates the information into one centralized system to unify multiple data sets.

- Accuracy. Manual processes performed by humans create opportunities for errors and require time to enter, edit, and review large volumes of data. Data extraction automates these tedious processes and helps reduce both time and errors.
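Automation reduces errors, but extracted data still benefits from automatic checks. One common check, sketched below under the assumption that a record contains an invoice number, a total, and line items with amounts, is verifying that the line items add up to the total. The field names and the `validate_invoice` function are hypothetical; `Decimal` is used instead of `float` so currency amounts compare exactly.

```python
from decimal import Decimal


def validate_invoice(record: dict) -> list:
    """Return a list of problems; an empty list means the record passed."""
    problems = [
        f"missing field: {field}"
        for field in ("invoice_number", "total", "line_items")
        if not record.get(field)
    ]
    if problems:
        return problems  # the sum check needs all fields
    # Convert via str() so float inputs don't carry binary rounding noise.
    items_sum = sum(Decimal(str(item["amount"])) for item in record["line_items"])
    total = Decimal(str(record["total"]))
    if items_sum != total:
        problems.append(f"line items sum to {items_sum}, but total is {total}")
    return problems
```

Records that return a non-empty list can be held back for review instead of being written to your database.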

What are the types of data extraction?

We’ve reviewed the benefits of data extraction, but how is it typically applied? The first step to using data extraction to your advantage is identifying areas that benefit from the process. The following types of data are commonly extracted:

- Bank statements. Bank statements are designed to be secure, which can make them challenging to identify and organize. Their file names are often random numbers, so digitizing them consolidates them in one place. Bank statements also contain important information, so you want document redundancy; scanning the statements and extracting their data is vital for that redundancy and for protecting the data itself.

- Financial data. Along with bank statements, financial data can help you organize your business. From sales numbers and purchasing costs to competitor pricing, data helps companies track their performance, reduce inefficiencies, and plan strategically to fix weak spots in their business.

- Customer data. This data helps businesses analyze and understand their customers. It includes information like names, phone numbers, email addresses, ID numbers, purchase history, social media activity, web searches, and more. You can extract all of this information and use it to build a database.

- Performance data. This data includes information related to tasks or operations within a company, for example, customer feedback or logistics information such as shipping costs.
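Bank statements are a good example of data that follows a repeating line layout. The sketch below assumes a hypothetical layout of date, description, and amount on each line (`DD/MM/YYYY  Description  -1,234.56`); real statements vary by bank, so the pattern would need adjusting. Lines that don’t match the layout, such as headers and balances, are skipped.

```python
import re
from datetime import datetime
from decimal import Decimal

# Hypothetical layout: "DD/MM/YYYY  Description  -1,234.56"
TRANSACTION_LINE = re.compile(r"^(\d{2}/\d{2}/\d{4})\s+(.+?)\s+(-?[\d,]+\.\d{2})$")


def parse_statement(text: str) -> list:
    """Return one dict per transaction line; other lines are ignored."""
    transactions = []
    for line in text.splitlines():
        match = TRANSACTION_LINE.match(line.strip())
        if not match:
            continue  # headers, balances, and footers don't match the layout
        date, description, amount = match.groups()
        transactions.append({
            # Normalize dates to ISO 8601 so they sort and import cleanly.
            "date": datetime.strptime(date, "%d/%m/%Y").date().isoformat(),
            "description": description,
            # Decimal keeps currency amounts exact.
            "amount": Decimal(amount.replace(",", "")),
        })
    return transactions
```

The result is a list of uniform records that can be written to a spreadsheet or database, regardless of how many pages the statement had.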

After discovering your extraction needs, you’re ready to figure out how to extract the data and decide where you want or need to store it. Docparser can automatically import documents from a specific folder in your cloud storage. Our app integrates directly with Box, Google Drive, and Dropbox. If you use a different cloud storage provider, such as OneDrive, you can connect it through one of our integration platform partners.

Integration platforms are great for copying and synchronizing data and documents between cloud applications and for automating tedious workflow tasks. Docparser can connect with several of these platforms.

All the platforms can import documents to Docparser and place the parsed data in any chosen location. So, importing documents from the cloud is easy if you have an account with one of the supported integration platforms.

Frequently Asked Questions (FAQs)

Does Docparser have page count limitations?

This is probably the question our clients ask most often. Our application was primarily designed for short transactional documents of 1-10 pages, like invoices, purchase orders, and bank statements. If your documents are longer than 30 pages, Docparser may not be the best fit for your business.

What is the file size limit?

Documents are limited to 20MB. However, because your local upload speed affects how quickly our servers receive the file, we recommend a maximum file size of 8MB; larger documents are more likely to fail to import into our application.

Customers on our higher-tier plans, such as Business + and Enterprise, have a higher upload limit.
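If you upload documents in bulk, you can check file sizes before uploading. The sketch below encodes the 20MB limit and the 8MB recommendation from the answer above. It assumes megabytes are counted as 1024 × 1024 bytes, which may not match how the limit is actually measured, and the function names are hypothetical. Customers on higher-tier plans can pass a larger `hard_limit`.

```python
from pathlib import Path

MB = 1024 * 1024  # assumption: binary megabytes
RECOMMENDED_LIMIT = 8 * MB
HARD_LIMIT = 20 * MB


def classify_upload_size(num_bytes: int, hard_limit: int = HARD_LIMIT) -> str:
    """Return 'ok', 'warning' (above the recommendation), or 'too_large'."""
    if num_bytes > hard_limit:
        return "too_large"
    if num_bytes > RECOMMENDED_LIMIT:
        return "warning"
    return "ok"


def check_folder(folder: Path) -> dict:
    """Classify every PDF in a folder by size before uploading it."""
    return {p.name: classify_upload_size(p.stat().st_size) for p in folder.glob("*.pdf")}
```

Files flagged as `warning` could be compressed or split before upload, and `too_large` files skipped entirely.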

Does Docparser extract data from emails?

No. Docparser doesn’t have email extraction capabilities. However, you can use emails to import PDF files into Docparser. For example, if you receive PDF files like invoices by email, you can upload those documents to Docparser.

We recommend our sister app, Mailparser.io. It’s an industry-approved leader in email parsing.
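If invoices arrive as email attachments, the PDFs first need to be pulled out of the messages before they can be uploaded. Here is a minimal sketch using Python’s standard `email` package. It assumes you already have the raw message bytes (for example, from an IMAP client or an exported `.eml` file); `pdf_attachments` is a hypothetical helper name, and the upload step itself is not shown.

```python
from email import policy
from email.parser import BytesParser


def pdf_attachments(raw_email: bytes) -> list:
    """Return (filename, content) pairs for every PDF attached to a message."""
    message = BytesParser(policy=policy.default).parsebytes(raw_email)
    found = []
    for part in message.walk():
        if part.is_multipart():
            continue  # containers hold other parts, not file data
        filename = part.get_filename() or ""
        if part.get_content_type() == "application/pdf" or filename.lower().endswith(".pdf"):
            # decode=True undoes the base64 or quoted-printable transfer encoding.
            found.append((filename, part.get_payload(decode=True)))
    return found
```

Checking both the content type and the file extension catches PDFs that senders attach with a generic `application/octet-stream` type.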

We hope you now have a better picture of the different options for extracting data from PDF documents. Please don’t hesitate to leave a comment or reach out to us by email.
