Optical Character Recognition (OCR) technology got better and better over the past decades thanks to more elaborated algorithms, more CPU power and advanced machine learning methods. Getting to OCR accuracy levels of 99% or higher is however still rather the exception and definitely not trivial to achieve.

At Docparser we learned how to improve OCR accuracy the hard way and spent weeks on fine-tuning our OCR engine. If you are in the midst of setting up an OCR solution and want to know how to increase the accuracy levels of your OCR engine, keep on reading…

In this article, we cover different techniques to improve OCR accuracy and share our takeaways from building a world-class OCR system for Docparser.

First, Let’s Define OCR Accuracy

When it comes to OCR accuracy, there are two ways of measuring how reliable OCR is:

- Accuracy on a character level

- Accuracy on a word level

In most cases, the accuracy in OCR technology is judged upon character level. How accurate an OCR software is on a character level depends on how often a character is recognized correctly versus how often a character is recognized incorrectly. An accuracy of 99% means that 1 out of 100 characters is uncertain. While an accuracy of 99.9% means that 1 out of 1000 characters is uncertain.

Measuring OCR accuracy is done by taking the output of an OCR run for an image and comparing it to the original version of the same text. You can then either count how many characters were detected correctly (character level accuracy), or count how many words were recognized correctly (word level accuracy).

To improve word level accuracy, most OCR engines make use of additional knowledge regarding the language used in a text. If the language of the text is known (e.g. English), the recognized words can be compared to a dictionary of all existing words (e.g. all words of in the English language corpus). Words containing uncertain characters can then be “fixed” by finding the word inside the dictionary with the highest similarity.

In this article we will focus on improving the accuracy on character level. The more accurate characters are recognized, the less “fixing” on a word level is required.

Bird’s-Eye View On OCR Accuracy

When it comes to improving OCR accuracy, you basically have two moving parts in the equation.

The Quality Of Your Source Image

If the quality of the original source image is good, i.e. if the human eyes can see the original source clearly, it will be possible to achieve good OCR results. But if the original source itself is not clear, then OCR results will most likely include errors. The better the quality of original source image, the easier it is to distinguish characters from the rest, the higher the accuracy of OCR will be.

The OCR Engine

An OCR engine is the software which actually tries to recognize text in whatever image is provided. There are various OCR engines available, ranging from free open source OCR engines to proprietary solutions with a hefty price tag.

While many OCR engines are using the same type of algorithms, each of them comes with its own strengths and weaknesses.OCR accuracy comparison is difficult and choosing the right OCR engine mostly depends on your specific use-case, the allocated budget and how it integrates into an existing system.

At the moment of writing it seems that Tesseract is considered the best open source OCR engine. The Tesseract OCR accuracy is fairly high out of the box and can be increased significantly with a well designed Tesseract image preprocessing pipeline. Furthermore, the Tesseract developer community sees a lot of activity these days and a new major version (Tesseract 4.0) is on its way. The accuracy of Tesseract can be increased significantly with the right Tesseract image preprocessing toolchain. When it comes to proprietary OCR engines, it seems that ABBYY FineReader takes the pole position.

How to Increase Accuracy With OCR Image Processing

Let’s assume you already settled on an OCR engine. This leaves us with one single moving part in the equation to improve accuracy of OCR: The quality of the source image.

As stated above, the better the quality of the original source image, the higher the accuracy of OCR will be.

But what means “image quality” in this case? Just think of it as “making it as easy as possible” for the OCR engine to distinguish a character from the background. Which means that we want to have

- sharp character borders

- high contrasts

- well aligned characters and

- as less pixel noise as possible.

Most engines come with built-in OCR image processing filters to automatically improve the quality of a text image. The problem with those built-in filters is that you might not be able to tweak them to match your use case.

In our experience, understanding every preprocessing step and tweaking the preprocessing parameters individually is key increase OCR performance.

So let’s dive into it … Here is how to improve accuracy of OCR results by preprocessing your images:

Good Quality Original Source

Yes, we are repeating this on purpose! The first basic step for having accurate OCR conversions is to ensure good quality source images. Make sure the original paper document is not damaged, wrinkled, discoloured or printed with low contrast ink. If the original source file has any of these, the output will not be very clear. So, use the cleanest and the most original source of the file to be converted.

For the sake of this article, let’s have a look at a sample image with not optimal quality. The sample image below clearly needs some preprocessing like binarization, deskewing and removal of scanning artefacts.

Scaling To The Right Size

Ensure that the images are scaled to the right size which usually is of at least 300 DPI (Dots Per Inch). Keeping DPI lower than 200 will give unclear and incomprehensible results while keeping the DPI above 600 will unnecessarily increase the size of the output file without improving the quality of the file. Thus, a DPI of 300 works best for this purpose.

Increase Contrast

Low contrast can result in poor OCR. Increase the contrast and density before carrying out the OCR process. This can be done in the scanning software itself or in any other image processing software. Increasing the contrast between the text/image and its background brings out more clarity in the output.

Binarize Image

This step converts a multicolored image (RGB) to a black and white image. There are several algorithms to convert a color image to a monochrome image, ranging from simple thresholding to more sophisticated zonal analysis.

Most OCR engines are internally working with monochrome images and do a color->monochrome conversion as one of the first steps. If you are in control of this preprocessing step and you do it right, chances are high that you get a higher OCR accuracy.

Another advantage of binarizing your images before sending them to your OCR engine is the reduced size of your images. The image below shows our original image from above as a binarized bitmap.

Remove Noise and Scanning Artefacts

Noise can drastically reduce the overall quality of the OCR process. It can be present in the background or foreground and can result from poor scanning or the poor original quality of the data.

Deskew

This may also be referred to as rotation. This means de-skewing the image to bring it in the right format and right shape. The text should appear horizontal and not tilted in any angle. If the image is skewed to any side, deskew it by rotating it clockwise or anti clockwise direction.

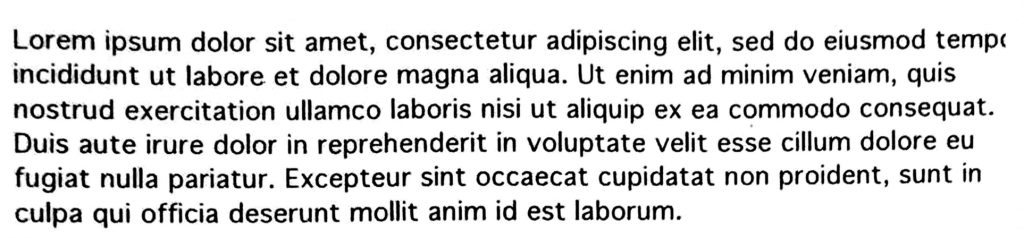

Below you’ll see the final image which we can send to the OCR engine. The image was binarized, de-skewed and scanning artefacts (black border) were removed with a tool called Unpaper (see further below).

Layout Analysis (or Zone Analysis)

In order to detect words correctly, it is important to first recognize the zones or the layout (which are also the areas of interest). This step detects the paragraphs, tables, columns, captions of the images etc. If the software misses out on any zone or layout, words might be cut in half or not detected at all.

Most OCR solutions come with a built-in layout analysis. You can however go a step further and apply Zonal OCR techniques to define exactly the part of the image holding the text you want to extract.

Open Source Tools You Can Use To Improve OCR Accuracy

So what are your options when you want to programmatically increase the quality of your source images? At Docparser, we recommend the following open source tools for image preprocessing for improving ocr accuracy:

- Leptonica – A general purpose image processing and image analysis library and command line tool. Leptonica is also the library used by Tesseract OCR to binarize images.

- OpenCV – An open source image processing library with bindings for C++, C, Python and Java. OpenCV was designed for computational efficiency and with a strong focus on real-time applications.

- ImageMagick – A general purpose image processing library and command line tool. A long list of command line options is available for any kind of image processing job.

- unpaper – The name says all. Unpaper is a postprocessing library specifically built for eliminating all “paper” related issues from a scanned document. If you want decent results without any tweaking, don’t look further and use Unpaper.

- Gimp – A powerful open source image editor which you can use to manually improve the quality of individual images.

What We Learned From Building The Docparser OCR Pipeline

When there is one thing we learned about OCR accuracy, it is that there is no silver-bullet and shortcut for OCR performance. Before you can optimize anything, you need to carefully inspect the documents you are given. Once you know the shortcomings of your documents, you can apply the preprocessing steps listed above accordingly to improve OCR accuracy.

Understanding the different preprocessing steps is key for creating a preprocessing pipeline tailored to the documents you want to process. This is why we choose to expose all preprocessing options in Docparser to our users. Our default settings cover most cases quite well, but each preprocessing step can be tweaked to match the type of documents a user wants to process.

Do you have any questions regarding OCR processing? We also have a sister product that is the leading email parsing solution on the market. Leave a comment below or contact us. We are eager to hear from you!

Also, don’t forget to create a free account with Docparser and check if our solution is maybe the answer to your document data extraction needs!